PSHMEM

I developed the PSHMEM package to provide several low-level classes that act as building blocks for operations that make use of one-sided MPI communication and node-shared memory. These include:

-

The MPIShared class which implements a data buffer stored in node shared memory which is transparently replicated across nodes so all processes in an MPI communicator can read values while minimizing the number of copies across a large MPI job.

-

The MPILock class, which implements a mutex lock across a communicator and passes ownership of the lock to communicator ranks in a first-come first-serve model.

-

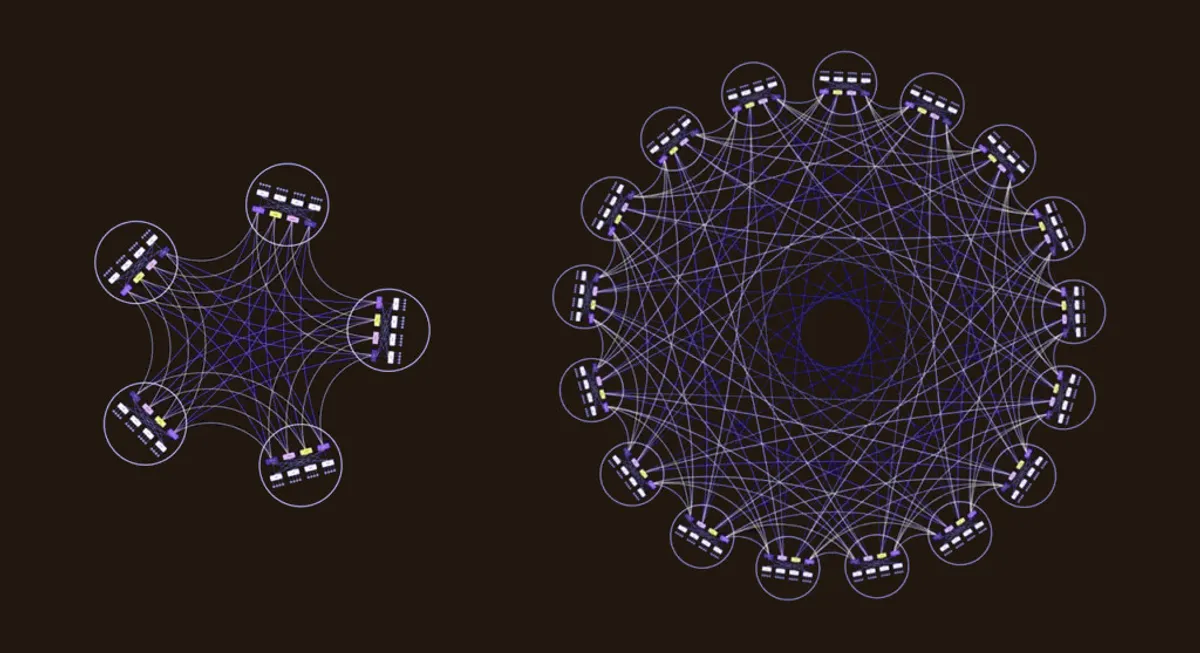

The MPIBatch class, which can be used to assign tasks to worker sub-communicators in a load-balanced way. The task states are stored in memory on one process and accessed using one-sided MPI communication without the need for a separate process to do coordination.
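The task-claiming idea behind MPIBatch can be illustrated without MPI at all. The following is only a sketch under stated assumptions, not the PSHMEM API: threads stand in for worker sub-communicators, a plain Python list stands in for the task states held on one process, and a `threading.Lock` stands in for the lock that guards one-sided access. The names `claim_next_task`, `worker`, `run_batch` and the state codes are hypothetical. Unlike MPILock, `threading.Lock` makes no first-come, first-served guarantee.

```python
import threading

# Hypothetical state codes for this sketch; not PSHMEM's actual constants.
OPEN, RUNNING, DONE = 0, 1, 2


def claim_next_task(states, lock):
    """Atomically claim the first open task, or return None if none remain."""
    with lock:
        for index, state in enumerate(states):
            if state == OPEN:
                states[index] = RUNNING
                return index
    return None


def worker(name, states, lock, completed):
    """Keep claiming tasks until the shared state table has no open entries."""
    while True:
        index = claim_next_task(states, lock)
        if index is None:
            break
        # Real work for task `index` would happen here.
        with lock:
            states[index] = DONE
            completed.append((name, index))


def run_batch(n_tasks, n_workers):
    """Run all tasks with self-scheduling workers and no coordinator."""
    states = [OPEN] * n_tasks
    lock = threading.Lock()
    completed = []
    threads = [
        threading.Thread(target=worker, args=(f"worker-{i}", states, lock, completed))
        for i in range(n_workers)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return states, completed
```

Because each worker pulls its next task only when it is free, faster workers naturally take on more tasks, which is where the load balancing comes from. No thread is dedicated to handing out work.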

PSHMEM is available on both PyPI and conda-forge.

Recent Work

The shared memory operations now use Python standard library classes rather than low-level calls to the POSIX shared memory API. The MPIBatch functionality was added recently and is already in use in some types of batch processing jobs for the Simons Observatory.
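The text above does not name the standard library classes involved. As a minimal sketch, assuming the relevant class is `multiprocessing.shared_memory.SharedMemory`, this is how a named shared block can be created, written, and read back through a second handle. The helper `write_then_read` is hypothetical and is not part of PSHMEM.

```python
import struct
from multiprocessing import shared_memory


def write_then_read(values):
    """Write doubles into a named shared block and read them via a second handle.

    `values` must be a non-empty sequence of floats.
    """
    fmt = f"{len(values)}d"
    size = struct.calcsize(fmt)
    # The creating handle owns the block and is responsible for unlinking it.
    owner = shared_memory.SharedMemory(create=True, size=size)
    try:
        struct.pack_into(fmt, owner.buf, 0, *values)
        # Attach by name, as another process on the same node would do.
        reader = shared_memory.SharedMemory(name=owner.name)
        try:
            result = list(struct.unpack_from(fmt, reader.buf, 0))
        finally:
            reader.close()
    finally:
        owner.close()
        owner.unlink()
    return result
```

In a real multi-process job the attaching handle would live in a different process, and only one process per node would create and unlink the block.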